As organizations move toward CMMC compliance and align with NIST SP 800-171 requirements, the use of artificial intelligence (AI) tools is rapidly increasing, especially among administrators and developers.

While AI improves efficiency and accelerates workflows, it also introduces new cybersecurity risks that many organizations are not fully addressing.

In a CMMC Level 2 environment, where the protection of Controlled Unclassified Information (CUI) is critical, the use of AI must be carefully governed.

The Growing Use of AI in Regulated Environments

AI tools are now commonly used for:

- Troubleshooting technical issues

- Generating or reviewing code

- Analyzing configurations

- Automating operational tasks

However, in many cases, these tools are used without clearly defined policies or restrictions, increasing the risk of data exposure and non-compliance.

This is particularly concerning for defense contractors and organizations handling CUI, where strict controls are required.

Understanding the Risk: CUI Exposure Through AI Tools

One of the most significant risks in using AI tools is the unintentional exposure of sensitive data.

It’s important to recognize that CUI is not limited to documents. It can include:

- Source code

- System configurations

- Architecture diagrams

- Troubleshooting and diagnostic data

When users copy and paste this type of information into external AI tools, they may unknowingly expose sensitive or regulated data.

From a cybersecurity compliance perspective, this is a major concern, because the organization can no longer show where that data is stored or who can access it.

Data Leaving the Controlled Boundary

Under CMMC and NIST 800-171, organizations are required to maintain control over systems that process and store CUI.

When data is entered into an external AI platform:

- It may leave the organization’s authorized system boundary

- It may be stored or processed in uncontrolled environments

- It may be retained or reused without visibility

This introduces data leakage risks and challenges compliance with key security controls.

Once data leaves the controlled boundary, organizations lose:

- Visibility

- Control

- Assurance of proper handling
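
One way to make the boundary concrete is to check each AI endpoint against a list of approved hosts before any data is sent. The following is a minimal sketch, not a definitive control: the host name `ai.internal.example.com`, the `APPROVED_AI_HOSTS` set, and the `is_within_boundary` function are all hypothetical names chosen for illustration.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: hosts the organization has authorized for AI use.
APPROVED_AI_HOSTS = {"ai.internal.example.com"}


def is_within_boundary(url: str) -> bool:
    """Return True only if the URL's host is on the approved list."""
    host = urlparse(url).hostname  # None when no host can be parsed
    return host is not None and host in APPROVED_AI_HOSTS


# An approved internal endpoint passes; an unlisted external one does not.
print(is_within_boundary("https://ai.internal.example.com/v1/chat"))
print(is_within_boundary("https://api.external-ai.example.org/v1/chat"))
```

In practice a check like this would normally live in a proxy or egress filter rather than in user code, so that it cannot be bypassed by choosing a different tool.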

Are Internal AI Tools Safe?

Some organizations implement internal AI solutions to reduce risk.

While this can support secure AI usage, it does not eliminate compliance concerns.

If internal AI tools are not properly governed, they can still:

- Allow unauthorized access to CUI

- Process data in non-compliant ways

- Lack proper logging, monitoring, or audit controls

Without proper configuration and oversight, even internal tools can create compliance gaps.

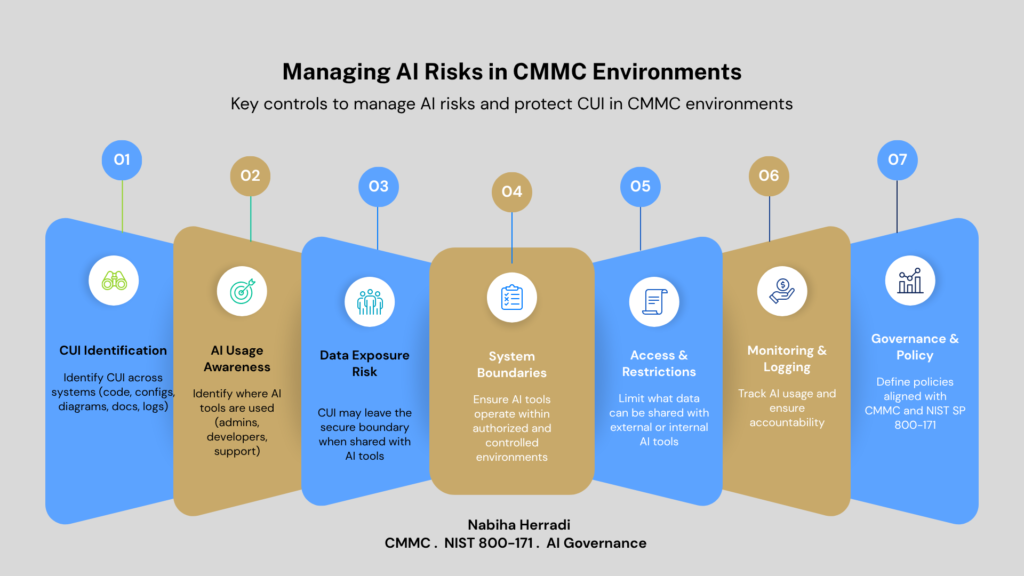

AI Governance in a CMMC Environment

The solution is not to avoid AI; it is to implement strong AI governance and cybersecurity controls.

Organizations should establish:

1. Clear AI Usage Policies

Define what is allowed and prohibited when using AI tools, especially regarding CUI and sensitive data.

2. Data Handling Restrictions

Prevent users from sharing regulated data with unauthorized AI platforms.

3. Access Control and Configuration

Ensure AI tools are configured in alignment with CMMC and NIST 800-171 security requirements.

4. Monitoring and Auditing

Track how AI tools are used and ensure accountability.

5. Security Awareness and Training

Educate users, especially admins and developers, on AI security risks and compliance responsibilities.
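
The data handling and monitoring controls above can be combined in a small pre-submission check. The sketch below is illustrative only: it assumes prompts pass through a gateway the organization controls, and the patterns, the `screen_prompt` function, and the record fields are hypothetical. Matching marking strings such as `CUI` catches only labeled content, so unmarked CUI would still pass this check.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only: they match common banner-style markings,
# not unmarked CUI such as unlabeled source code or configurations.
CUI_PATTERNS = [
    re.compile(r"\bCUI\b"),
    re.compile(r"\bCONTROLLED//"),
]


def screen_prompt(user: str, tool: str, prompt: str) -> dict:
    """Decide whether a prompt may be sent and build an audit record."""
    matched = [p.pattern for p in CUI_PATTERNS if p.search(prompt)]
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool,
        "allowed": not matched,
        "matched_patterns": matched,
        # Record a hash rather than the prompt text so the log
        # does not store the content itself.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }


record = screen_prompt("jdoe", "internal-assistant", "CUI//SP-CTI firewall config: ...")
print(json.dumps(record, indent=2))
```

Each record captures who used which tool and whether the prompt was blocked, which gives auditors a trail of AI usage without copying the sensitive content into the log.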

Balancing Innovation and Compliance

AI is becoming a critical part of modern operations, and organizations should leverage it to remain competitive.

However, in regulated environments, innovation must align with compliance.

For organizations pursuing:

- CMMC Level 2 certification

- NIST 800-171 compliance

- CUI protection strategies

AI usage must be implemented within defined security boundaries.

Final Thoughts

The use of AI in cybersecurity and compliance environments is inevitable, but it must be controlled.

Organizations that fail to address AI-related risks may face:

- Data exposure incidents

- Compliance failures

- Increased audit findings

By implementing AI governance frameworks, clear policies, and technical controls, organizations can safely adopt AI while maintaining compliance.

It’s not about restricting AI; it’s about using it securely, strategically, and within a compliant framework.

Need Help Assessing AI Risks in Your CMMC Environment?

If your organization is preparing for CMMC and evaluating how AI tools impact your compliance posture, I help teams identify gaps, define governance controls, and align with NIST SP 800-171 requirements.